Go to main conference web site:

Latest Conference Information below:

Updated Presentation Program:

http://www.calit2.net/~jschulze/tmp/futureofvr/

Live Streaming:

We are live streaming the presentations in the auditorium

to allow remote participation. The URLs for the video streams are:

Day 1

https://www.youtube.com/watch?v=vc9SPApLu20

Day 2

https://www.youtube.com/watch?v=Li8Z1XjEafo

The presentations will be recorded, and

the recordings will be made available after post-processing. Check back here a

week or two after the conference.

Keynote Speakers:

Amir Rubin: Tuesday, 10:45am

|

|

Amir Rubin, Co-Founder and CEO of Sixense,

is a pioneer and visionary in virtual reality and an entrepreneur with over

20 years of experience building companies and developing products in the

fields of VR, simulation, video games, and motion tracking. Sixense is shaped around Amir’s two fundamental tenets

for virtual reality: (1) to deliver the best user experience, the technology

should be transparent to the user, and (2) a platform’s success depends on

its ability to enable application developers. |

David Brin: Wednesday, 10:45am

|

|

David Brin, PhD “Our far-out future? The return of the Village” Virtuality may

provide us with "godlike" powers of visualization. And yet, in many

ways it will bring back many patterns of our ancestors. Bio: David Brin is a scientist,

inventor, and New York Times bestselling author. With books translated into

25 languages, he has won multiple Hugo, Nebula, and other awards. A film

directed by Kevin Costner was based on David’s novel The Postman, with other

works under option. David’s science-fictional Uplift Saga explores

genetic engineering of higher animals, like dolphins, to speak and join our

civilization. In EARTH and EXISTENCE he explores near future trends

that may transform our world. |

Conference Speakers:

|

|

Andrew Allen, Ph.D. Programmer, researcher,

artist UC San Diego |

|

|

Prof. Sheldon Brown UCSD Sheldon Brown holds the

John D. and Catherine T. MacArthur Foundation Endowed Chair in Digital Media

and Learning. He is the Director of the Arthur C. Clarke Center for Human

Imagination and is UCSD Site Director of the NSF Sponsored Center for Hybrid

Multicore Productivity Research (CHMPR). He is the former Director of the

Center for Research in Computing and the Arts (CRCA) and is a Co-PI and

founder of New Media Arts for the California Institute of Information

Technology and Telecommunications (Calit2). In the Visual Arts Department his

undergraduate teaching is in the Computing in the Arts area and with the

Interdisciplinary Computing in the Arts major. His courses focus on the

engagement of real-time computer graphics, media and electronic controls for

installation works. At the graduate level, his teaching is across all

disciplines. His artwork examines relationships between information and

space, which manifest as public artworks, and installations that combine

architectural settings with mediated and computer controlled elements. Recent

projects include: The Scalable City, an interactive game installation, 3D

movie and other artifacts shown at venues including the Shanghai MOCA, The

Exploratorium, The National Academy of Science, Ars

Electronica, and many others. StudioLab, 2003

installation at Image/Architecture, Florence Italy; Smoke and Mirrors,

2000-2002 an installation at the Fleet Science Museum, and a touring

environment; Istoria, a series of sculptures; Mi Casa es Tu

Casa/My House Is Your House, 1997 - 2000, a networked virtual reality

installation between the National Center for the Arts in Mexico City and the

Children's Museum of San Diego; In the Event, 1995, at the Seattle Center Key

Arena, Seattle WA, 60ft. x 8 ft. x 2 ft., 28 video monitors, 9 computers,

video disk, 3 live feeds, 70 cast aluminum panels; The Video Wind Chimes,

1994, at the Yerba Buena Center for the Arts, San Francisco, CA, four video

projectors, electronic controls, aluminum, plastic; and Apparitions, 1994, a

virtual reality environment, at the University Art Gallery at UCSD. Brown has

received awards and fellowships from the National Endowment for the Arts, The

National Science Foundation, The Rockefeller Foundation, the Seattle Arts

Commission, the Hellman Foundation, the Asian Cultural Council, AT&T

Foundation, Intel Corporation, IBM, nVidia,

Silicon Graphics Inc., Sony Corporation, and others. He has previously been

on the faculty of the School of the Art Institute of Chicago and the Kansas

City Art Institute. His current work, Istoria, is a

set of tableau sculptures, developed with visualization software he is

developing through a residency at the Institute for Studies in the Arts at

Arizona State University. |

|

|

Thomas A. DeFanti, Ph.D. Research Scientist Qualcomm Institute Thomas A. DeFanti is an internationally recognized pioneer in

visualization and virtual reality technologies. As a leader in the

development of next-generation networks to advance science, DeFanti has also overseen a multitude of innovations in

the area of computer networks. DeFanti

received a B.A. in Mathematics from Queens College in 1969, an M.S. in

Computer Information Science from Ohio State University in 1970, and there,

three years later in 1973, a Ph.D. in Computer Information Science. He did his

PhD work under Charles Csuri in the Computer

Graphics Research Group. For his dissertation, he created the GRASS

programming language. In 1973, he joined the faculty

of the University of Illinois at Chicago. In the next 20 years at the

University, DeFanti amassed a number of

credits, including the use of EVL hardware and software for the computer

animation produced for the Star Wars movie. With Daniel J. Sandin, he founded the Circle Graphics Habitat, now known

as the Electronic Visualization Laboratory (EVL). DeFanti

contributed greatly to the growth of the SIGGRAPH organization and

conference. He served as Chair of the group from 1981 to 1985, co-organized

early film and video presentations, which became the Electronic Theatre, and

in 1979 started the SIGGRAPH Video Review, a video archive of computer

graphics research. DeFanti

is a Fellow of the Association for Computing Machinery. He has received the

1988 ACM Outstanding Contribution Award, the 2000 SIGGRAPH Outstanding

Service Award, and the UIC Inventor of the Year Award. |

|

|

Serafin Diaz, VP Engineering “Computer Vision at Qualcomm R&D” |

|

|

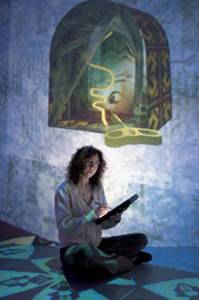

Prof. Margaret Dolinsky: “Margaret Dolinsky is

among the most notable artists creating Virtual Reality works in the final

years of the twentieth century” [Jacqueline Ford Morie,

PhD thesis 2008 SmartLab at the University of East

London] |

|

|

Dr. Yoan Eynaud (Post-doctoral scholar) and Clinton Edwards

(Staff biologist), Scripps Institution of Oceanography

“Widening our view of the

reef: How 3D reconstruction might change coral reef ecology” The use of virtual reality

associated technologies in marine ecology is growing rapidly. The Sandin Lab at the Scripps Institution of Oceanography is

using 3D digital imaging to explore coral reef benthic dynamics across

multiple gradients of local human impacts and environmental factors. Indeed,

understanding how marine organisms interact and compete for space on the

ocean floor is essential if we want to preserve one of the most diverse and

productive ecosystems on earth. Using individual islands as replicates across

the Pacific Ocean, we are tracking the fate of reefs over time. Thousands of

individual high-resolution images of the reef floor are combined using

Structure from Motion to form 3D and 2D mosaics. These biological maps

provide an interactive view of the reef allowing us to track the fate of

individual organisms at a landscape scale, and thus gain an explicit

understanding of the rules that govern reef dynamics. In this presentation,

we will describe how we use 3D and 2D reconstruction of the reef for both

research and outreach purposes. |

|

|

Name: Stephen Guerin

Title: CEO, Simtable; Faculty, Santa Fe Institute CSSS

Talk Title: Full Circle AR: coupling projected interactive surfaces to crowd-sourced reality capture

Summary: Simtable develops interactive physical sandtables for

emergency response, leveraging agent-based models of wildfire, flood, hazmat and

traffic. I will discuss recent development efforts of our LiveTexture

platform for crowd-sourced capture of animated point clouds of real-time events, along with a live demonstration of the Simtable. |

|

|

Ed Helwig,

Research Scientist/Developer at Livermore Software Technology Corporation. “Industry

Potential for Virtual Reality Tomorrow” - Virtual Reality with Virtual

Engineering as a future industry trend to enhance design and decision making. |

|

|

Jeffrey Johnson Jeffrey is a geospatial

software engineer with 15+ years of experience building and delivering

applications for the web. Jeff is a developer with a broad range of skills

who can work at any level, from writing code and fixing bugs to managing

complex projects and making architectural decisions while coordinating

technical policy with corporate strategy. He spent several years working with

international civil aviation authorities on early versions of AIXM, an

emerging standard for the interchange of aviation data developed jointly by

the FAA, Eurocontrol and NGA. Jeff is also deeply

involved with the City of San Diego’s Open Data and Civic Technology

initiatives. He is a graduate of Humboldt State University where he studied

Geography, Cartography, Geology and Geospatial Technology. |

|

|

Prof. Falko Kuester

Calit2 Professor for Visualization and Virtual Reality

Associate Professor, Department of Structural Engineering

Associate Professor, Department of Computer Science and Engineering

Director, Calit2 Center of Graphics, Visualization and Virtual Reality (GRAVITY)

Director, Center of Interdisciplinary Science for Art, Architecture and Archaeology (CISA3)

Jacobs School of Engineering, University of California, San Diego

Dr. Kuester received an MS degree in Mechanical Engineering in 1994 and an MS degree in

Computer Science and Engineering in 1995 from the University of Michigan, Ann

Arbor. In 2001 he received his PhD from the University of California, Davis

and currently is the Calit2 Professor for Visualization and Virtual Reality

at the University of California, San Diego. Dr. Kuester

holds appointments as Associate Professor in the Departments of Structural

Engineering and Computer Science and Engineering and serves as the director

of the Calit2 Center of GRAVITY (Graphics, Visualization and Virtual Reality)

and Director of the Center of Interdisciplinary Science for Art, Architecture

and Archaeology (CISA3). |

|

|

Prof. Thomas E. Levy Thomas Evan Levy is

Distinguished Professor and holds the Norma Kershaw Chair in the Archaeology

of Ancient Israel and Neighboring Lands at the University of California, San

Diego. He is a member of the Department of Anthropology and Judaic Studies

Program, and leads the Cyber-archaeology research group at the Qualcomm

Institute, California Institute of Telecommunications and Information Technology

(Calit2). Elected to the American Academy of Arts and Sciences, Levy is a

Levantine field archaeologist with interests in the role of technology,

especially early mining and metallurgy, on social evolution from the

beginnings of sedentism and the domestication of

plants and animals in the Pre-Pottery Neolithic period (ca. 7500 BCE) to the

rise of the first historic Levantine state level societies in the Iron Age

(ca. 1200 – 500 BCE). A Fellow of the Explorers Club, Levy won the 2011

Lowell Thomas Award for “Exploring the World’s Greatest Mysteries.” Levy has

been the principal investigator of many interdisciplinary archaeological

field projects in Israel and Jordan that have been funded by the National

Geographic Society, the National Endowment for the Humanities, National

Science Foundation, and other organizations. Tom also conducts ethnoarchaeological research in India. Levy, his wife

Alina Levy and the Sthapathy traditional craftsmen

from the village of Swamimalai co-authored the book

Masters of Fire - Hereditary Bronze Casters of South India (Bochum: German

Mining Museum, 2008). Tom has published 12 books and several hundred

scholarly articles. Levy’s recent book is entitled Historical Biblical

Archaeology – The New Pragmatism (London: Equinox Publishers, 2010), which in

2011 won the ‘best scholarly book’ award from the Biblical Archaeology Society

(Washington, DC). Levy and his colleague Mohammad Najjar

won Biblical Archaeology Review’s ‘Best BAR Article’ for “Condemned to the

Mines: Copper Production & Christian Persecution.” His most recent book

is: Levy, T.E., M. Najjar, and E. Ben-Yosef, eds.

2014. New Insights into the Iron Age Archaeology of Edom, Southern Jordan -

Surveys, Excavations and Research from the Edom Lowlands Regional Archaeology

Project (ELRAP). Los Angeles: Cotsen Institute of

Archaeology Press, UCLA. He is Co-PI on the NSF

IGERT $3.2 million grant entitled “Training, Research and Education in

Engineering for Cultural Heritage Diagnostics” (TEECH). Levy directs the UC

San Diego Levantine and Cyber-Archaeology Laboratory and is Associate

Director of the Center of Interdisciplinary Science for Art, Architecture and

Archaeology (CISA3) at UC San Diego’s Qualcomm Institute – California

Institute of Telecommunications and Information Technology (Calit2). Tom was

recently elected Chair of the Committee on Archaeological Policy (CAP) of the

American Schools of Oriental Research (ASOR). |

|

|

Peter Otto is an expert in

the language and aesthetics of musical and media expression, and also

accomplished in advanced hardware/software design and engineering, including

instrumentation and facilities design, systems and networking applications,

and a wide array of media technology research and development areas.

Classically trained in musical performance and composition, he completed his

graduate work at California Institute of the Arts in Los Angeles in 1984, and

continued there on faculty for several years. His vitae

includes long associations with seminal figures Morton Subotnick and Luciano Berio, as

well as studies and collaborations with Pulitzer Prize winners Mel Powell and

Roger Reynolds. He currently holds appointments at UCSD as Technology

Director on the Faculty of Music and as Director of Research &

Development in the Sonic Arts R&D group at UCSD's CalIT2, established in

2009. As an educator he is a founding faculty member and advisor to UCSD

Music's highly regarded Interdisciplinary Computing and the Arts Major

(ICAM), a program which has produced top performers in the nation's most

advanced digital media industries and leading universities. As a hardware

designer he invented the first digital audio workstation control surface (Waveframe's Contact MIDI Panel), designed the

hardware-based spatial audio system TRAILS, and more recently designed audio

systems for CalIT2 (StarCave, HiperWall

and other systems). Audio and music facility credits include CalIT2's Spatial

Audio Lab (Spatlab) and collaborative designs for

CalIT2's Black Box and Digital Cinema Theatres, and new systems and studios

at UCSD Music's new Prebys Music Center

(Experimental Theatre and other systems). Other design work includes advanced

research projects in high-definition multi-channel audio streaming and

production systems, most notably for CineGrid, a

networked ultra-high-definition digital cinema R&D consortium. Research

sponsors and collaborators include SkySound (LucasArts), Qualcomm, Inc., Cisco, Meyer Sound Labs,

National Institutes of Health, HMC Architects, CineGrid,

Walt Disney Productions, NTT, Biamp, Google, Comhear, Kyocera, NASA, NSF, Cubic, Harman, DTS, and

others. In software design, Otto has written software for diverse

applications in multi-channel and spatial audio, including binaural and

multi-channel sound design environments and utilities, and a variety of

spatial audio imaging packages. An entrepreneur, he has founded two software

companies and consulted for top tier firms in the private sector. His

performance design work has been heard in major American, European and Asian

venues such as Carnegie Hall, Juilliard, Los Angeles Philharmonic, SIGGRAPH,

Theatre Olympics (Japan), The Holland Festival, Fondation Maeght (Fr.), Santa Cecilia (Italy), Barbican and Royal

Albert Halls (London), Ars Electronica (Austria),

and many others. |

|

|

Arnaud Paris, VR Supervisor

for VideoStitch: |

|

|

Prof. Ravi Ramamoorthi

Director, UC San Diego Center for Visual Computing

Professor, CSE Department; affiliate in ECE

University of California, San Diego

News: I started here at

the University of California, San Diego CSE@UCSD on Jul 1, 2014, moving from

UC Berkeley. My goal is to build a world-leading graphics and vision group at

UCSD. (See launch of new UC San Diego Center for Visual Computing with

newspaper article at UT San Diego, UCSD TV Computing Primetime on Visual Computing

and earlier UCSD News Release on my appointment). |

|

|

Patty Rangel Producer, VR Geek, Artist,

Writer and Speaker |

|

|

Jason Riggs Founder / CEO at OSSIC Engineering leader with 20

years of experience in loudspeaker & headphone

R&D in both engineering and leadership roles. I enjoy creating

innovative solutions to complex problems, and thinking outside the box. I

excel at user-centered design innovation for audio products. I am passionate

about building great audio products and companies. |

|

|

Jared Sandrew, Chief Creative Officer @ Legend3D

2D to 3D / VR live-action |

|

|

Stuart W. Volkow “Commercial Trends in VR and AR” The convergence of affordable technologies is

creating a new industry with diverse categories. 2016 is a tipping point year

for mass awareness of VR experiences, the emergence of a viable consumer

market, and practical educational and industrial applications. Market growth

and acceptance will be slowed by conflicting standards, confusing hardware,

and a shortage of quality content. After

a long gestation period of over 20 years, Virtual Reality and Augmented

Reality have arrived as a sustainable consumer medium

in a variety of forms. The milestone sale of Oculus

Rift to Facebook for $2 Billion (reportedly $400 M in cash) has set the

stage. Technological improvements, including 4K lightweight displays,

improvements in graphics and video processing chipsets, excellent

micro-mechanical gyroscopic 3D tracking and odometry,

low cost, high-fidelity depth cameras, and gesture control interfaces, have

come together to create practical, affordable, immersive systems.

Applications to immersive worlds and gaming are obvious starting points for

appropriate titles. Virtual tourism, sports, journalism and immersive

storytelling may become mainstream even faster than

gaming. Industrial growth markets will include design, engineering, medicine,

and field servicing of complex equipment.

|

Demonstrations in Atkinson Hall’s Black Box Theater:

Allen Yang, UC Berkeley: Various demonstrations from the

Yang laboratory.

Amir Rubin, Sixense: STEM. Sixense’s new, modular wireless controller for

entertainment and scientific visualization applications.

Ching Lee, UCSD: Perspective Puzzle. Uses Oculus Rift and

game pad for interaction.

David Nuernberger, UCSD: VR

Rock Climbing. Uses PlayStation and Oculus Rift to augment a

real rock climbing experience with a fictitious environment.

Eric, VideoStitch: Live

streaming of panoramic stereo video from a GoPro rig to an Oculus Rift.

Helen Situ, NextVR: High end

panoramic 3D video viewed with the Samsung Gear VR.

John Mangan, UCSD: Virtual

Reality demonstration from Prof. Kuester’s GRAVITY

laboratory at UCSD.

James Strawson, UCSD: Virtual

Reality demonstration from Prof. Kuester’s GRAVITY

laboratory at UCSD.

Jonathan Lin, UCSD: Sorting Objects. Uses

Oculus Rift, Leap Motion and Ring Mouse to move items from one place to

another.

Michael Hess, UCSD: Virtual Reality demonstration from

Prof. Kuester’s GRAVITY laboratory at UCSD.

Philip Weber, UCSD: CalVR. 3D

models, stereo panoramas, point clouds and other data rendered with Prof.

Schulze’s laboratory’s CalVR virtual reality engine.

Stephen Guerin, Simtable:

Projections onto a sand pit allow augmented reality scientific visualization of

terrain data and fire simulations.

Steven McCloskey, UCSD: Nano VR. Oculus Rift and Razer

Hydra controlled by Unreal Engine improve understanding of phenomena in the nano world.

Saurabh Goyal, SDSU: A virtual exploration of the air quality in

National City. Uses an Android phone with a Google

Cardboard-style viewer for low cost visualization of information relevant to

asthma patients.

Demonstrations at Nearby Venues:

StarCAVE at

Atkinson Hall: 34 HD projectors generate a 360 degree surround VR image, driven

by 18 high end graphics PCs networked with 10 Gbit/sec.

Trish Stone will present various projects from the past few years. Presentation

times: 12-2pm Tue+Wed only.

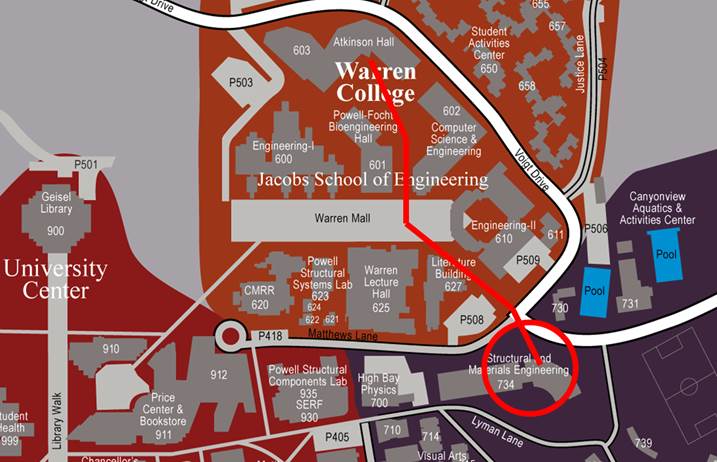

WAVE at SME building: 35 narrow bezel displays show

passive stereo images driven by a cluster of 19 high end graphics PCs networked

with 40 Gbit/sec. Christopher McFarland will present

various projects from the past few years. Presentation times: 12-2pm Tue+Wed only.

Walking directions to the WAVE lab: leave Atkinson

Hall towards the south, walk past the bear leaving it on your left hand side, bear

slightly left at Warren Mall, cross the street, SME building is now on your

right, swimming pools are on your left. Enter SME building through side door to

the left of the main entrance. Door will be open.